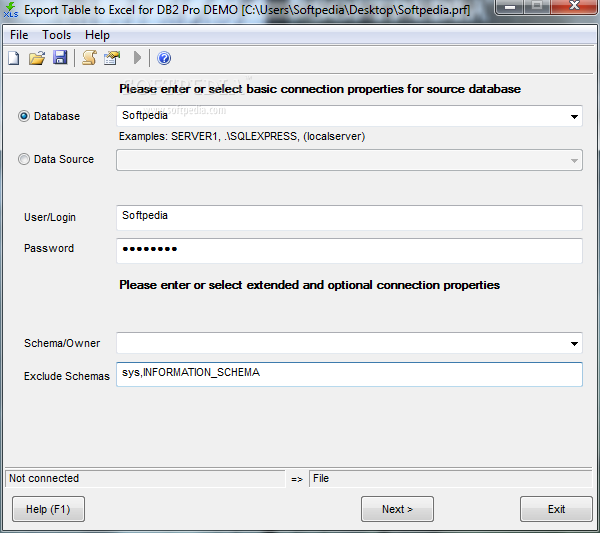

Weeknotes: airtable-export, generating screenshots in GitHub Actions, Dogsheep!

This week I figured out how to populate Datasette from Airtable, wrote code to generate social media preview card page screenshots using Puppeteer, and made a big breakthrough with my Dogsheep project.

I wrote about Rocky Beaches in my weeknotes two weeks ago. It’s a new website built by Natalie Downe that showcases great places to go rockpooling (tidepooling in American English), mixing in tide data from NOAA and species sighting data from iNaturalist.

Rocky Beaches is powered by Datasette, using a GitHub Actions workflow that builds the site’s underlying SQLite database using API calls and YAML data stored in the GitHub repository.

Natalie wanted to use Airtable to maintain the structured data for the site, rather than hand-editing a YAML file. So I built airtable-export, a command-line script for sucking down all of the data from an Airtable instance and writing it to disk as YAML or JSON. You run it like this:

airtable-export out/ mybaseid table1 table2 --key=key

This will create a folder called out/ containing the exported data. Sadly the Airtable API doesn’t yet provide a mechanism to list all of the tables in a database (a long-running feature request) so you have to list the tables yourself.

We’re now running that command as part of the Rocky Beaches build script, and committing the latest version of the YAML file back to the GitHub repo (thus gaining a full change history for that data).

I really like social media cards: og:image HTML meta attributes for Facebook and twitter:image for Twitter. I wanted them for articles on my TIL website since I often share those via Twitter. One catch: my TILs aren’t very image heavy. So I decided to generate screenshots of the pages and use those as the 2x1 social media card images.

The best way I know of programmatically generating screenshots is to use Puppeteer, a Node.js library for automating a headless instance of the Chrome browser that is maintained by the Chrome DevTools team.

My first attempt was to run Puppeteer in an AWS Lambda function on Vercel. I remembered seeing an example of how to do this in the Vercel documentation a few years ago. The example isn’t there any more, but I found the original pull request that introduced it. Since the example was MIT licensed I created my own fork at simonw/puppeteer-screenshot and updated it to work with the latest Chrome.

It’s pretty resource intensive, so I also added a secret ?key= mechanism so only my own automation code could call my instance running on Vercel.

I needed to store the generated screenshots somewhere. They’re pretty small, on the order of 60KB each, so I decided to store them in my SQLite database itself and use my datasette-media plugin (see Fun with binary data and SQLite) to serve them up.

I ran into a showstopper bug when I realized that the screenshot process relies on the page being live on the site, but when a new article is added it’s not live when the build process runs, so the generated screenshot is of the 404 page. So I reworked it to generate the screenshots inside the GitHub Action as part of the build script, using puppeteer-cli.

My generate_screenshots.py script handles this, by first shelling out to datasette --get to render the HTML for the page, then running puppeteer to generate the screenshot. In outline, for a page such as til/til/python_debug-click-with-pdb.md it sets page_html = str(TMP_PATH / "generate-screenshots-page.html"), uses datasette through subprocess to generate the HTML, and then uses puppeteer screenshot through a second subprocess call to generate a PNG.

Every time my GitHub build script runs it downloads the previous SQLite database file, so it can avoid regenerating screenshots and HTML for pages that haven’t changed. The site is hosted on Vercel, and Vercel has a 5MB response size limit. The addition of the binary screenshots drove the size of the SQLite database over 5MB, so the part of my script that retrieved the previous database no longer worked.

I needed a reliable way to store that 5MB (and probably eventually 10-50MB) database file in between runs of my action. The best place to put this would be an S3 bucket, but I find the process of setting up IAM permissions for access to a new bucket so infuriating that I couldn’t bring myself to do it.

I created a new dedicated GitHub repository, simonw/til-db, and updated my action to store the binary file in that repo, using a force push so the repo doesn’t need to maintain unnecessary version history of the binary asset. This is an abomination of a hack, and it made me cackle a lot.
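
For anyone wanting to try the two-step screenshot approach from generate_screenshots.py, here is a minimal sketch of how it might look. It is not the actual script: the database filename tils.db, the 800x400 viewport, the exact puppeteer-cli arguments, and the assumption that puppeteer-cli writes the PNG to standard output when no output file is given are all my guesses, so check each tool's --help before relying on them. The run parameter is a hypothetical hook that makes the function testable without either tool installed.

```python
import subprocess
import tempfile
from pathlib import Path

# Hypothetical scratch directory; the post only shows the name TMP_PATH.
TMP_PATH = Path(tempfile.gettempdir())


def datasette_command(path, db_path="tils.db"):
    # "datasette <db> --get <path>" prints the rendered page to stdout.
    # The database filename is an assumption made for this sketch.
    return ["datasette", db_path, "--get", path]


def puppeteer_command(page_html, viewport="800x400"):
    # Assumed puppeteer-cli invocation; 800x400 gives a 2x1 card shape.
    return ["puppeteer", "screenshot", page_html, "--viewport", viewport]


def png_for_path(path, run=subprocess.run):
    """Render `path` to HTML with Datasette, then screenshot that HTML file."""
    page_html = str(TMP_PATH / "generate-screenshots-page.html")

    # Step 1: use datasette to generate HTML and save it to a temporary file.
    proc = run(datasette_command(path), capture_output=True, check=True)
    Path(page_html).write_bytes(proc.stdout)

    # Step 2: use puppeteer screenshot to generate a PNG (assumed to arrive
    # as bytes on stdout).
    proc2 = run(puppeteer_command(page_html), capture_output=True, check=True)
    return proc2.stdout
```

Because the page is rendered from the local database rather than fetched from the live site, a brand new article can be captured before it has been deployed, which is the problem the 404 screenshots exposed.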

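The build avoids regenerating screenshots for pages that haven’t changed by consulting the previous SQLite database. The post doesn’t show how that check works, so the following is only one plausible sketch: it assumes a hypothetical screenshots table and compares a SHA-256 hash of the rendered HTML against the stored one. The table name, column names, and function names are all invented for illustration.

```python
import hashlib
import sqlite3


def ensure_table(conn):
    # Hypothetical schema: one row per page, with the PNG stored as a BLOB.
    conn.execute(
        "CREATE TABLE IF NOT EXISTS screenshots ("
        "path TEXT PRIMARY KEY, html_hash TEXT, png BLOB)"
    )


def html_digest(html):
    return hashlib.sha256(html.encode("utf-8")).hexdigest()


def needs_screenshot(conn, path, html):
    """Return True if the page is new or its HTML changed since the last run."""
    row = conn.execute(
        "SELECT html_hash FROM screenshots WHERE path = ?", (path,)
    ).fetchone()
    return row is None or row[0] != html_digest(html)


def store_screenshot(conn, path, html, png_bytes):
    # Record the PNG together with the hash of the HTML it was made from.
    conn.execute(
        "INSERT OR REPLACE INTO screenshots (path, html_hash, png) "
        "VALUES (?, ?, ?)",
        (path, html_digest(html), png_bytes),
    )
    conn.commit()
```

A build script along these lines would open the database retrieved from the previous run, call needs_screenshot for each page, and only invoke the screenshot step when it returns True.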